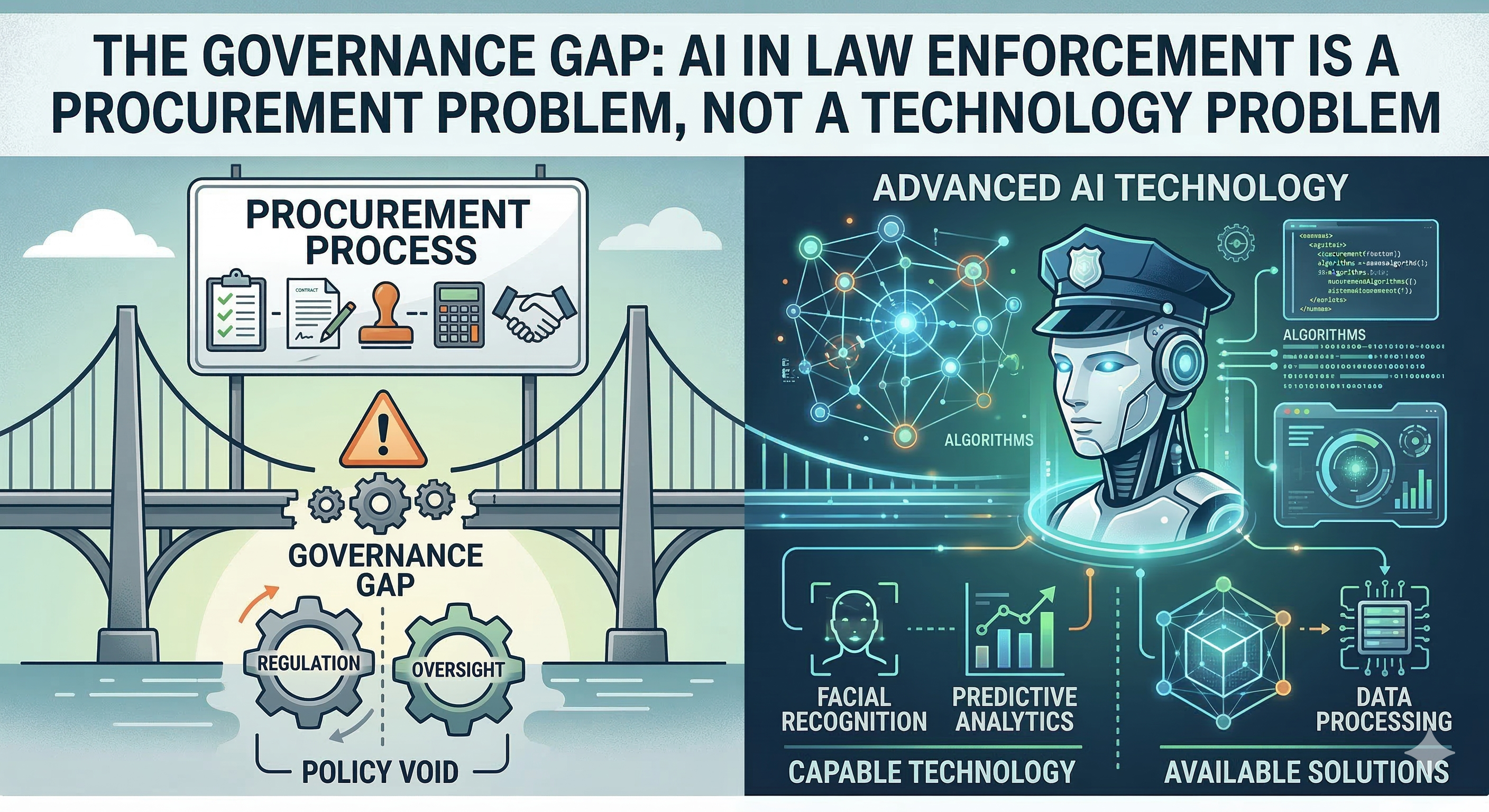

The Governance Gap: AI in Law Enforcement Is a Procurement Problem, Not a Technology Problem

The debate over AI in policing has calcified into two camps: surveillance dystopia versus public safety miracle. Both miss the actual story.

Over the past three years, cities such as Los Angeles, New Orleans, and Pittsburgh have abandoned predictive policing programs amid concerns about accuracy and public backlash. Meanwhile, real-time crime centers (fusion hubs that combine camera feeds, license plate readers, and gunshot detection) have quietly expanded to more than 100 U.S. cities. The technology isn’t retreating. It’s shape-shifting.

The Procurement Problem

The fundamental issue isn’t whether AI works. It’s whether the agencies buying it can tell whether it works.

A RAND Corporation analysis found that most police departments lack the technical expertise to evaluate the tools they procure. Vendor performance claims go unvalidated. Contracts are signed without independent testing protocols, audit requirements, or sunset clauses. Departments are deploying systems they cannot meaningfully assess and, in many cases, cannot even fully describe to their own oversight bodies.

This isn’t a technology problem. It’s an institutional capacity problem wearing a technological costume.

The Evidence Gap

The evidence picture is genuinely mixed, which makes the governance vacuum worse.

NIST’s Face Recognition Technology Evaluation shows that top-performing algorithms have improved dramatically — demographic differentials have narrowed, and the best systems now operate at error rates that would have seemed impossible five years ago. But performance degrades significantly on surveillance-quality imagery: low resolution, poor lighting, off-angle captures. The gap between lab conditions and field deployment remains substantial.

On the oversight side, a GAO investigation found that six federal agencies used Clearview AI’s facial recognition service without ever conducting the privacy impact assessments required by their own policies. A follow-up GAO report documented ongoing civil liberties concerns with federal use of facial recognition — insufficient training, unclear policies on when and how the technology can be deployed, and inadequate tracking of how often it produces actionable leads versus false positives.

The Regulatory Patchwork

Europe decided the question was straightforward enough to legislate. The EU AI Act treats real-time remote biometric identification by law enforcement in publicly accessible spaces as an unacceptable risk and prohibits it, with narrow exceptions for serious crime. Predictive policing based solely on profiling is prohibited as well.

The United States took the opposite approach: nothing binding at the federal level. Executive Order 14110, which established AI safety guidelines including provisions for law enforcement use, was revoked after just fifteen months. The regulatory vacuum persists.

What’s left is a patchwork. Some cities ban facial recognition. Some states require impact assessments. Most jurisdictions have no specific AI governance framework, and the agencies operating in those gaps aren’t waiting for one.

The Shift That Matters

The real trajectory isn’t “more surveillance” or “less surveillance.” It’s a quiet pivot from prediction to processing — from “where will crime happen?” to “how do we handle evidence faster?”

That shift is driven partly by the spectacular failures of predictive policing and partly by genuine operational need. The processing use case has stronger evidence and raises different, though not simpler, governance questions.

But governance hasn’t caught up to either version. Agencies are deploying AI tools through procurement processes that were never designed to evaluate them. Until institutional capacity matches technological capability, the governance gap will remain the real story, regardless of what the technology can do.